Understand at a glance what is happening with your DAGs and tasks, quickly pinpoint task failures, and drill down into root causes with Airflow’s intuitive grid view.

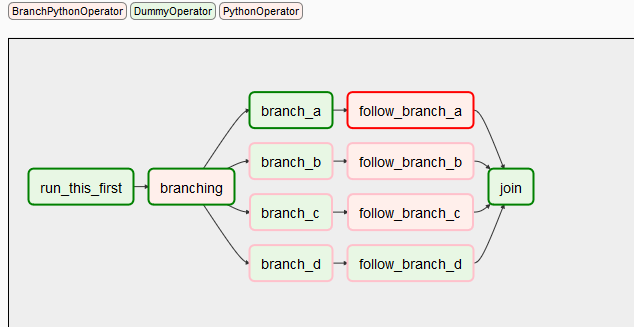

Apache Airflow, the open-source standard for workflow orchestration, is a platform for developing, scheduling, and monitoring batch-oriented data and computing workflows: sequences of processes and tasks that you author programmatically in Python. First developed by Airbnb, it is now under the Apache Software Foundation. Because workflows are Python code, they are easy to schedule and monitor, and a web interface helps manage their state.

Airflow's extensible Python framework enables you to build workflows that connect with virtually any technology. Airflow can run anything; it is completely agnostic to what you are running. Pull the latest version of any Airflow Provider at any time, or follow an easy contribution process to build your own and install it as a Python package. With Amazon MWAA, you can use Airflow and Python to create workflows without having to manage the underlying infrastructure for scalability, availability, and security.

When running tasks on Kubernetes, make the most of the `pod_override` parameter for easy 1:1 overrides and the new YAML `pod_template_file`, which replaces configs set in `airflow.cfg`.

Task Groups replace SubDAGs with a new way to group tasks in the Airflow UI. Task Groups don't affect task execution behavior and do not limit parallelism. Airflow also includes support for custom XCom backends.
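How a DAG's dependencies determine execution order, and why grouping tasks changes only their labels, can be illustrated with a small pure-Python model. This is a hedged sketch, not Airflow's API: the `dag` dictionary, `run_batches`, and `apply_group` are hypothetical names, and the standard library's `graphlib.TopologicalSorter` stands in for Airflow's scheduler. The `group_id.task_id` naming mirrors the prefix that Task Groups apply to task IDs by default.

```python
from graphlib import TopologicalSorter

# Hypothetical toy DAG (not Airflow's API): task ID -> set of upstream task IDs.
dag = {
    "extract": set(),
    "transform": {"extract"},
    "validate": {"extract"},
    "load": {"transform", "validate"},
}


def run_batches(graph: dict[str, set[str]]) -> list[list[str]]:
    """Group tasks into batches in dependency order.

    Every task in a batch has all of its upstream tasks in earlier batches,
    so tasks within one batch could run in parallel.
    """
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    batches = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        batches.append(ready)
        sorter.done(*ready)  # mark the whole batch as finished
    return batches


def apply_group(
    graph: dict[str, set[str]], group_id: str, members: set[str]
) -> dict[str, set[str]]:
    """Rename member tasks to '<group_id>.<task_id>'; edges are kept under the new names."""
    rename = {t: f"{group_id}.{t}" if t in members else t for t in graph}
    return {rename[t]: {rename[u] for u in ups} for t, ups in graph.items()}


print(run_batches(dag))
# [['extract'], ['transform', 'validate'], ['load']]

grouped = apply_group(dag, "prep", {"transform", "validate"})
print(run_batches(grouped))
# [['extract'], ['prep.transform', 'prep.validate'], ['load']]
```

Both calls produce batches of the same shape, so in this model grouping changes only the labels. In a real deployment, how many ready tasks actually run at once also depends on pools, concurrency settings, and available worker slots.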
Schedule, automate, and monitor data pipelines using Apache Airflow. Launch Scheduler replicas to increase task throughput and ensure high availability, and expect faster performance with near-zero task latency. Read more about the Airflow 2 scheduler.

Build programmatic services around your Airflow environment with Airflow's new API, now featuring a robust permissions framework.

Easily accommodate long-running tasks with deferrable operators and triggers that run tasks asynchronously, freeing up worker slots and making efficient use of resources. Leverage dynamic tasks, sensors, and deferrable operators to create robust, event-driven workflows. Run data quality checks, track data lineage, and work with …

Pass information between tasks with clean, efficient code that's abstracted from the task dependency layer. Spin up as many parallel tasks as you need at runtime in response to the outputs of upstream tasks, and chain dynamic tasks together to simplify and accelerate ETL and ELT processing.
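Passing an upstream task's output into a variable number of parallel downstream tasks can be modeled in plain Python. The sketch below is hypothetical and is not Airflow's API: `list_files`, `count_chars`, `summarize`, and the `expand` helper are illustrative names, and a thread pool stands in for Airflow's workers. It shows the three-step shape described above: produce a list at runtime, map a task over it, then reduce the results.

```python
from concurrent.futures import ThreadPoolExecutor

# Illustrative model only (not Airflow's API): plain functions stand in for
# tasks, and their return values play the role of values passed between tasks.


def list_files() -> list[str]:
    # Upstream task: the length of its output, known only at runtime,
    # decides how many mapped instances run.
    return ["a.csv", "bb.csv", "ccc.csv"]


def count_chars(name: str) -> int:
    # Mapped task: runs once per element of the upstream output.
    return len(name)


def summarize(sizes: list[int]) -> int:
    # Downstream task: receives every mapped result as one list.
    return sum(sizes)


def expand(fn, inputs, max_parallel: int = 4) -> list:
    """Hypothetical helper: run fn once per input in parallel.

    Executor.map returns results in input order, not completion order.
    """
    with ThreadPoolExecutor(max_workers=max_parallel) as pool:
        return list(pool.map(fn, inputs))


sizes = expand(count_chars, list_files())
print(sizes, summarize(sizes))  # [5, 6, 7] 18
```

In Airflow 2.3 and later, the corresponding feature is dynamic task mapping, exposed through a task's `expand()` method; the scheduler creates the mapped task instances at runtime rather than at DAG-definition time.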
